In the first part of our blog series, we explored the definition, challenges and use cases of predictive maintenance. We will now show you why careful data preparation is essential for accurate and reliable predictions. Predictive maintenance data comes with its own unique requirements, which need to be taken into account. In this blog entry, you’ll get a deep dive into various methods for processing raw data, as well as the data analysis techniques you need to predict your maintenance needs accurately. We will also show you which tools are best suited for cleaning and processing data, as well as for modeling.

The Best Way to Get Started with Your Data

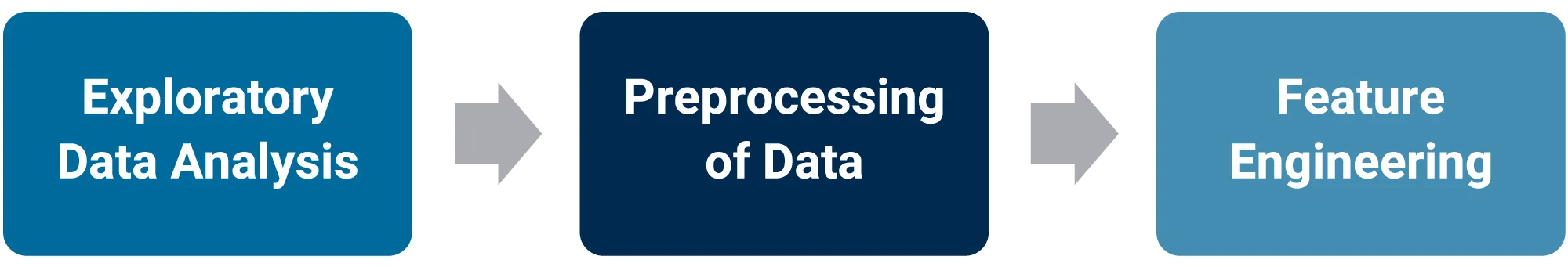

Data preparation is essential for precise predictive maintenance models, because data quality and consistency directly influence the accuracy of your predictions. To help you, we will give you a structured overview of the main steps of an effective data preparation workflow. For a closer look at each phase of the data preparation process for machine learning, our comprehensive whitepaper provides detailed guidelines and best practices.

Exploratory Data Analysis (EDA)

Exploratory data analysis (EDA) is the first step in data preparation. It helps you build a solid understanding of your data, recognize patterns and identify correlations between features. Data visualizations and summary statistics, such as the median or variance, are useful here. This step usually shows that predictive maintenance data is imbalanced, because errors, i.e. documented failures or faults, occur far less frequently than normal cases. This imbalance must be addressed during model development. EDA also exposes data quality issues that must be cleaned or improved during data preprocessing.
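As a minimal sketch of these first checks, the snippet below uses pandas on a small, made-up sensor log. The column names (`temperature`, `vibration`, `failure`) and all values are assumptions chosen for illustration, not data from a real machine.

```python
import pandas as pd

# Hypothetical sensor log; column names and values are illustrative only.
df = pd.DataFrame({
    "temperature": [61.0, 63.5, 62.1, 95.4, 60.8, 64.2, 62.9, 61.7],
    "vibration": [0.8, 0.9, 0.7, 3.9, 0.8, 1.0, 0.9, 0.8],
    "failure": [0, 0, 0, 1, 0, 0, 0, 0],
})

# Summary statistics per sensor: median and variance.
summary = df[["temperature", "vibration"]].agg(["median", "var"])

# Correlation between the two sensor readings.
correlation = df["temperature"].corr(df["vibration"])

# Share of each class; failures are typically the rare class.
class_share = df["failure"].value_counts(normalize=True)

print(summary)
print(f"temperature/vibration correlation: {correlation:.2f}")
print(class_share)
```

In this toy example, only one in eight rows is a failure, which is the kind of imbalance you would want to spot before choosing a model.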

Data Preprocessing

During EDA, issues such as missing values or duplicate entries typically surface, often caused by rare events when gathering machine data. These must be corrected as part of data preprocessing. Another unique aspect of predictive maintenance data is that it’s usually time-series based, meaning specialized techniques are required for time sequencing and aggregation. A common and effective method is smoothing the time series with a moving average. This reduces short-term fluctuations and noise, making long-term trends and seasonal patterns more visible within the data.
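The cleaning and smoothing steps described here could look roughly like this in pandas. The readings, the 10-minute sampling interval and the window of three readings are assumptions for illustration. Linear interpolation is only one of several ways to fill gaps and may not suit every signal.

```python
import numpy as np
import pandas as pd

# Hypothetical raw readings with one duplicated timestamp and one missing value.
timestamps = pd.to_datetime([
    "2024-01-01 00:00", "2024-01-01 00:10", "2024-01-01 00:10",
    "2024-01-01 00:20", "2024-01-01 00:30", "2024-01-01 00:40",
])
raw = pd.DataFrame(
    {"vibration": [1.0, 1.2, 1.2, np.nan, 1.6, 1.4]},
    index=timestamps,
)

# Drop rows whose timestamp is duplicated, keeping the first occurrence.
clean = raw[~raw.index.duplicated(keep="first")]

# Fill the gap by linear interpolation between the neighboring readings.
clean = clean.interpolate(method="linear")

# Smooth with a moving average over a window of 3 readings.
# The first two rows stay NaN because the window is not yet full.
clean["vibration_smooth"] = clean["vibration"].rolling(window=3).mean()

print(clean)
```

A larger window smooths more aggressively but also reacts more slowly to genuine changes, so the window size is worth tuning against your sampling rate.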

Normalization and scaling are another important part of data preprocessing. They help ensure that the data can be used in real time and integrated directly and smoothly into production prediction systems. The exact approach depends on the data characteristics and the prediction model selected. For example, consider a production machine equipped with sensors measuring temperature, pressure and vibration. Temperature might range from 20–100°C, while vibration ranges from 0–5 g (1 g corresponds to approximately 9.81 m/s²). Min–max scaling brings these differing value ranges onto the same scale, so that no feature outweighs the others in the model simply because of its larger numeric range. The values are transformed so that they fall within a uniform range of 0 to 1.
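Min–max scaling maps each value x to (x - min) / (max - min). The sketch below applies this to the temperature and vibration ranges from the example above. The function name `min_max_scale` and the sample readings are hypothetical. In practice, the minimum and maximum are usually taken from the training data rather than fixed by hand.

```python
def min_max_scale(values, lower, upper):
    """Map values from the range [lower, upper] onto [0, 1]."""
    if upper == lower:
        raise ValueError("upper and lower bounds must differ")
    return [(v - lower) / (upper - lower) for v in values]


# Illustrative readings; the bounds follow the ranges named in the text.
temperature_c = [20.0, 60.0, 100.0]
vibration_g = [0.0, 2.5, 5.0]

temperature_scaled = min_max_scale(temperature_c, lower=20.0, upper=100.0)
vibration_scaled = min_max_scale(vibration_g, lower=0.0, upper=5.0)

print(temperature_scaled)  # [0.0, 0.5, 1.0]
print(vibration_scaled)    # [0.0, 0.5, 1.0]
```

After scaling, 60°C and 2.5 g both map to 0.5, so the two sensors become directly comparable.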

Feature Engineering

Feature engineering is an important step when preparing data. It creates relevant features from raw data to boost the performance of a machine learning model: existing data is transformed, new features are generated and irrelevant features are removed. Effective feature engineering makes it easier to identify the specific patterns and trends in the data that are decisive when predicting failures or the state of machines.

Because predictive maintenance data is time-series based, it often contains valuable temporal patterns and trends, so temporal features are frequently created. These can capture trends over the last hour, day or week, as well as seasonal features such as the time of day, week or year. The length of time since the last failure or maintenance may also be of interest.

Traditional statistical features can also be used instead of raw values to better recognize certain patterns, e.g. the mean, median, standard deviation and variance of the sensor values. Minimum and maximum values can reveal extremes that may point to future errors. It might also be a good idea to combine certain features, e.g. temperature and vibration, when high temperatures correlate with high vibrations.
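To make these feature types concrete, here is a small pandas sketch that derives rolling statistics, calendar features, the time since the last failure and a combined feature. The column names, the hourly sampling and the window of three readings are assumptions for illustration only.

```python
import pandas as pd

# Hypothetical hourly readings; names and values are illustrative only.
index = pd.date_range("2024-01-01 00:00", periods=6, freq="60min")
df = pd.DataFrame(
    {
        "temperature": [60.0, 62.0, 64.0, 90.0, 61.0, 63.0],
        "vibration": [1.0, 1.0, 1.2, 4.0, 1.0, 1.1],
        "failure": [0, 0, 0, 1, 0, 0],
    },
    index=index,
)

# Statistical features over the last 3 readings.
df["temp_mean_3"] = df["temperature"].rolling(window=3).mean()
df["temp_std_3"] = df["temperature"].rolling(window=3).std()
df["temp_max_3"] = df["temperature"].rolling(window=3).max()

# Seasonal (calendar) features taken from the timestamp.
df["hour"] = df.index.hour
df["day_of_week"] = df.index.dayofweek

# Hours since the most recent failure (NaN before the first failure).
stamps = df.index.to_series()
last_failure = stamps.where(df["failure"] == 1).ffill()
df["hours_since_failure"] = (stamps - last_failure).dt.total_seconds() / 3600

# Combined feature: high when temperature and vibration are high together.
df["temp_x_vib"] = df["temperature"] * df["vibration"]

print(df)
```

When building such features for a real model, take care that each row only uses information that was available at that point in time. Otherwise, knowledge of the future leaks into training.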

Predictive Analysis Methods for Maintenance Forecasting

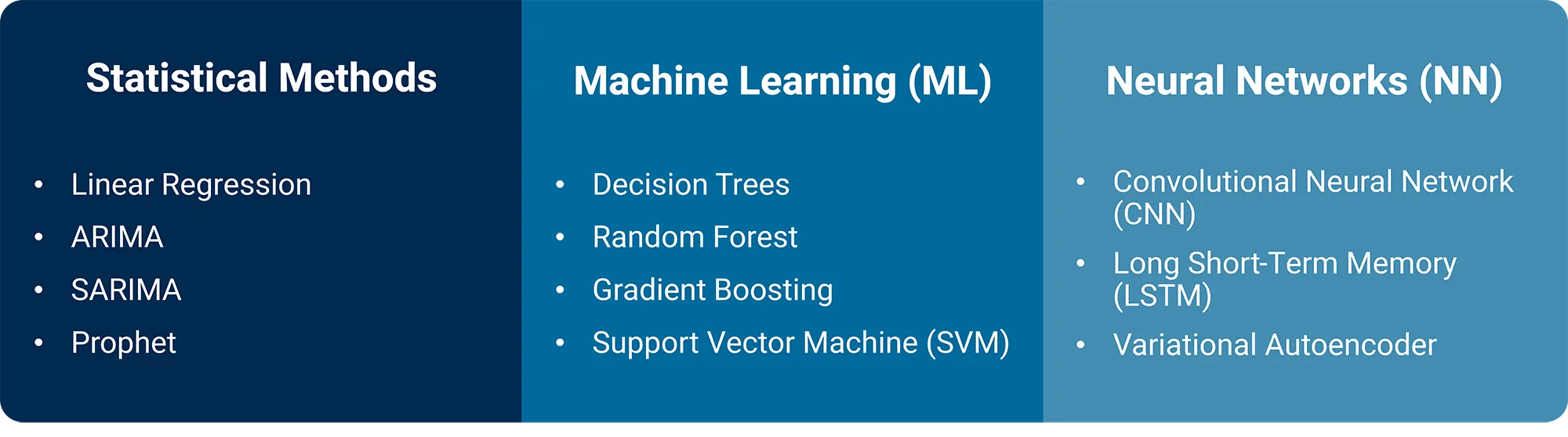

Once your data has been preprocessed and you have a basic understanding of it, you’re then ready to select a model for predicting future maintenance needs. The following will give you a structured overview of the most common prediction models. How to choose the right model for your use case and what steps follow afterward will be covered in detail in the next blog post of our series. For predictive maintenance data, you typically choose between statistical methods, traditional machine learning methods and neural networks.

- Statistical Methods: Statistical methods are built on clearly defined assumptions about data structure and are characterized by their strong interpretability. They’re well-suited for time-series data with seasonal or trend-based patterns and are especially useful when clarity and efficiency are important for medium-sized datasets. However, because they generally assume linear relationships, statistical methods may struggle with complex, nonlinear patterns or large and irregular datasets.

- Machine Learning (ML): Machine learning methods offer significantly more flexibility than statistical methods and can also recognize complex, nonlinear patterns without needing strict assumptions about the data structure. This makes them ideal for applications with many features and complex dependencies. However, they often require extensive feature engineering to properly process time-series data. While they can deliver high accuracy, they can be prone to overfitting the training data, especially when dealing with strongly time-dependent patterns or very large datasets, which reduces their predictive performance.

- Neural Networks (NN): As a specialized subset of machine learning, neural networks excel at recognizing complex, nonlinear patterns in large datasets. They handle time-dependent or sequential data well and require less manual feature engineering because they can learn relevant patterns directly from raw data. Neural networks offer high flexibility and precision but are computationally intensive, require longer training times and are often more difficult to interpret. They often produce the best results for complex predictions and anomaly detection, but are not particularly suitable for small datasets or simpler tasks.
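To illustrate the simplest end of this spectrum, the sketch below fits a linear trend to a vibration signal with ordinary least squares and extrapolates when it would reach an alarm limit. This is a deliberately basic stand-in for a statistical method, not a recommended production model. The readings, the helper `fit_linear_trend` and the threshold of 4.5 g are hypothetical.

```python
def fit_linear_trend(times, values):
    """Ordinary least squares fit of values = slope * time + intercept."""
    n = len(times)
    mean_t = sum(times) / n
    mean_v = sum(values) / n
    sxx = sum((t - mean_t) ** 2 for t in times)
    sxy = sum((t - mean_t) * (v - mean_v) for t, v in zip(times, values))
    slope = sxy / sxx
    intercept = mean_v - slope * mean_t
    return slope, intercept


# Hypothetical daily vibration readings in g, rising steadily.
days = [0, 1, 2, 3, 4]
vibration_g = [1.0, 1.5, 2.0, 2.5, 3.0]
ALARM_THRESHOLD_G = 4.5  # assumed limit, for illustration only

slope, intercept = fit_linear_trend(days, vibration_g)

# Extrapolate the fitted line to the day it crosses the threshold.
day_at_threshold = (ALARM_THRESHOLD_G - intercept) / slope

print(f"slope: {slope} g/day, intercept: {intercept} g")
print(f"threshold reached on day {day_at_threshold}")  # day 7.0
```

The result is easy to interpret, which is the main appeal of statistical methods. The linearity assumption is also why such a model would miss a sudden, nonlinear degradation.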

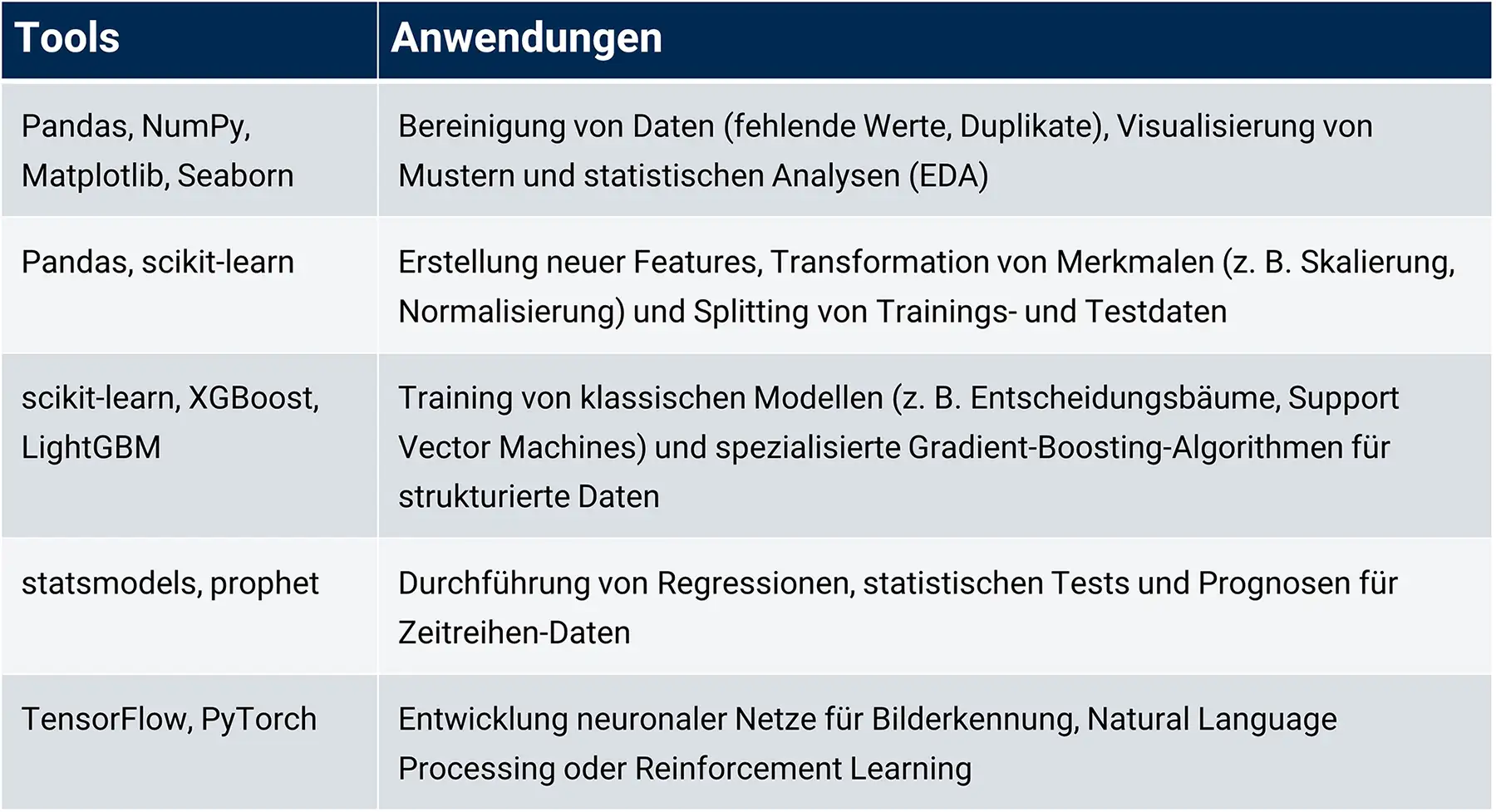

The Python stack provides a broad range of powerful libraries for data preparation and modeling. Choosing the right tool depends on your specific requirements and model complexity. The graphic above gives you an overview of which tools are best suited for which use cases.

Conclusion

Understanding your data, data preprocessing and feature engineering form the foundation for sound predictive maintenance results. Once you fully understand the characteristics of your data and have defined your objectives, you can begin selecting the method that best fits your needs.

In the final blog post of our series, we’ll guide you through selecting the method, training your model, evaluating results and rolling it all out.

Want to Unlock the Full Potential of Your Data?

Our data experts are here to support you in unlocking valuable insights through structured data processing. With the right data foundation, you can lay the groundwork for precise analyses and powerful AI models. Check out our full data analytics portfolio now! We would be happy to help you whip your data processes into shape!